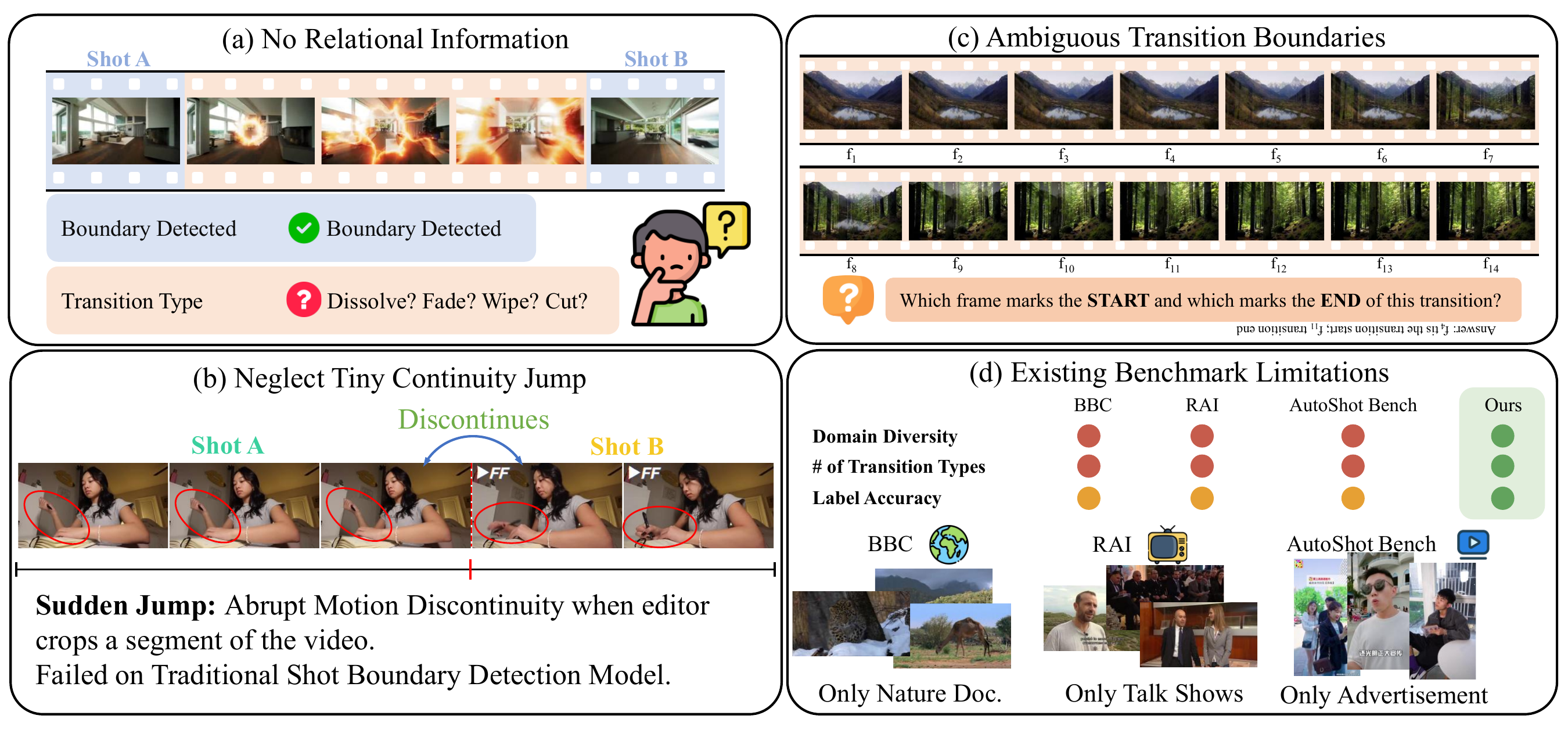

Limitations of traditional Shot Boundary Detection models.

(a) Detected Shots are hard to interpret: predicted boundaries lack explicit transition semantics;

(b) Sudden Jump is under-modeled and often missed;

(c) Human annotations are unreliable for gradual transition with subtle start/end frames;

(d) Existing benchmarks are outdated and have a narrow domain, failing to reflect modern internet editing diversity.

TL;DR We propose OmniShotCut, a shot query-based Transformer that detects shot boundaries while jointly predicting transition types and shot continuity relations, trained entirely on synthetic data.

Key Contributions

Reformulate SBD by enriching each shot with both intra-shot and inter-shot relational information, moving beyond simple temporal range prediction.

A shot query-based Transformer that jointly optimizes range prediction and relational classification within a unified hidden state.

A fully synthetic transition synthesis pipeline covering 9 types and 30 subtypes, scaling to 11.9M transitions without manual annotation.

A modern wide-domain benchmark sourced from YouTube, TikTok, and Bilibili for holistic and diagnostic SBD evaluation.

Shot Boundary Detection (SBD) automatically finds shot-change points to segment a video into coherent shots. Although SBD has been widely studied, existing state-of-the-art methods often produce boundaries that are hard to interpret around transitions, miss subtle yet harmful discontinuities, and rely on noisy, low-diversity annotations and outdated benchmarks. We propose OmniShotCut, which formulates SBD as structured relational prediction: a shot query-based dense video Transformer jointly estimates shot ranges together with intra-shot and inter-shot relations. To avoid imprecise manual labeling, we adopt a fully synthetic transition synthesis pipeline that automatically reproduces major transition families with precise boundaries and parameterized variants. We also introduce OmniShotCutBench, a modern wide-domain benchmark enabling holistic and diagnostic evaluation.

We extend traditional Shot Boundary Detection into an end-to-end model that predicts not only the temporal range of each shot, but also an intra-relation class for the shot itself and an inter-relation class with respect to the previous shot.
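As a rough illustration of this output format, the sketch below models one predicted shot as a small record holding a range and two relation labels. The class name, the field names, the inclusive-range convention, and the label strings are all assumptions made for this example; the source does not list its label vocabulary.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ShotPrediction:
    start: int           # first frame index of the shot (inclusive, assumed)
    end: int             # last frame index of the shot (inclusive, assumed)
    intra_relation: str  # class describing the shot itself (hypothetical label)
    inter_relation: str  # class relating the shot to the previous one (hypothetical label)


def is_well_ordered(shots: List[ShotPrediction]) -> bool:
    """Return True if every range is valid and ranges do not overlap in order."""
    prev_end = -1
    for shot in shots:
        if shot.start > shot.end or shot.start <= prev_end:
            return False
        prev_end = shot.end
    return True


# Hypothetical prediction for a 131-frame video.
example = [
    ShotPrediction(0, 47, "normal", "first_shot"),
    ShotPrediction(48, 71, "dissolve", "transition"),
    ShotPrediction(72, 130, "normal", "new_scene"),
]
```

Keeping the range and both relation labels in one record mirrors the idea that each shot carries its own semantics rather than being a bare pair of timestamps.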

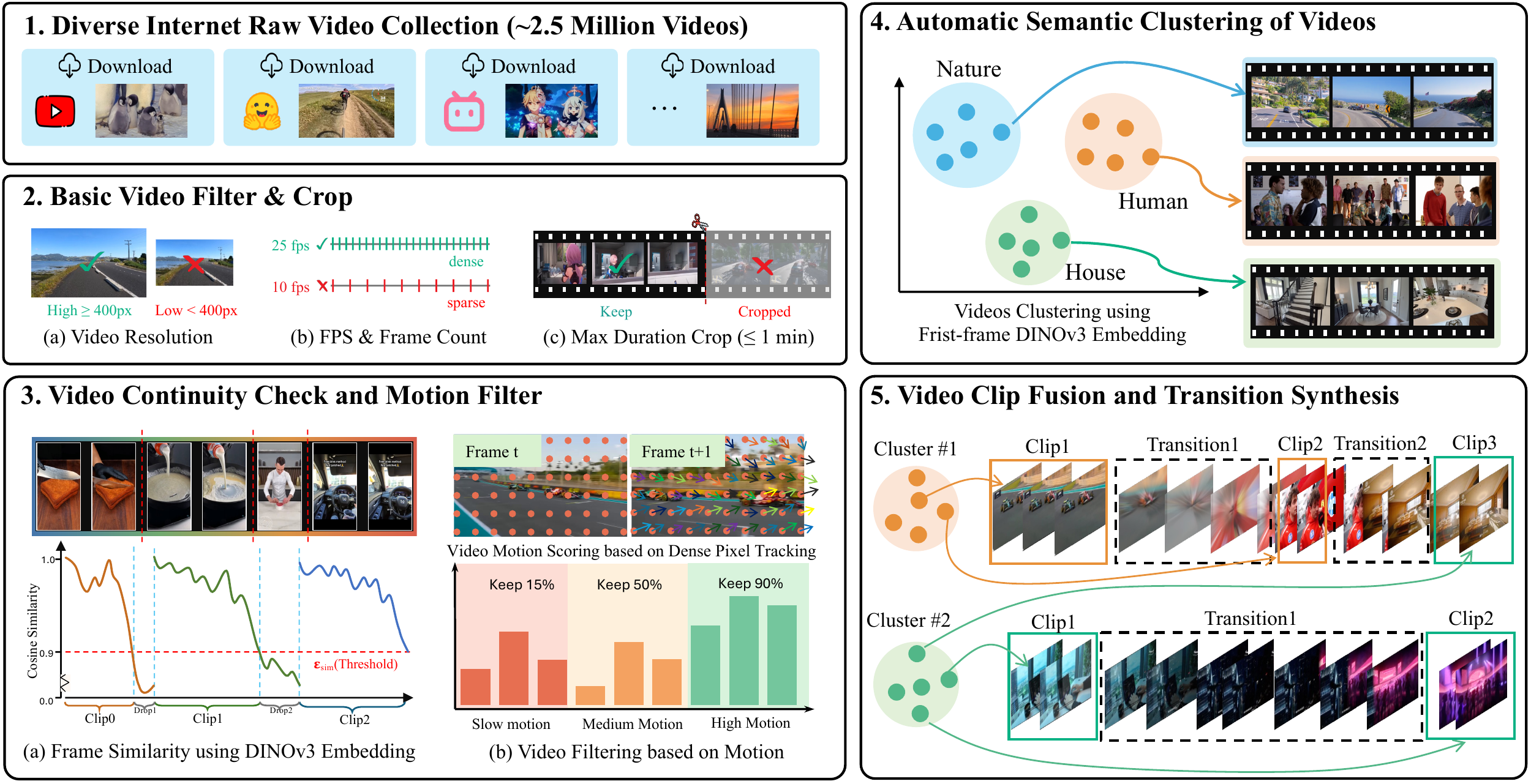

Video Curation. We collect ~2.5M raw internet videos and filter them by resolution, frame rate, and duration. Temporal continuity is verified via frame-level DINOv3 cosine similarity, and motion strength is estimated with dense tracking to select medium-motion clips suitable for sudden jump synthesis. The remaining clips are clustered into 27K semantic groups using SSL-based hierarchical K-means, yielding ~1.5M curated sources.
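The temporal continuity check can be sketched as follows, assuming per-frame embeddings have already been extracted (for example by DINOv3) and are passed in as plain lists of floats. The function names and the `min_similarity` threshold of 0.8 are illustrative assumptions, not values reported in the source.

```python
import math
from typing import List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def is_temporally_continuous(
    frame_embeddings: List[Sequence[float]],
    min_similarity: float = 0.8,  # assumed threshold, not from the source
) -> bool:
    """True if every pair of consecutive frames is at least `min_similarity` alike."""
    pairs = zip(frame_embeddings, frame_embeddings[1:])
    return all(cosine_similarity(prev, cur) >= min_similarity for prev, cur in pairs)
```

A clip that already contains a cut would show a sharp similarity drop between two consecutive frames and be rejected, so that every boundary in the synthetic data comes from the synthesis step itself.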

Transition Synthesis. Clip pairs are stitched with parameterized synthetic transitions spanning all 9 types and 30 subtypes. 75% of pairs are drawn from the same semantic cluster to simulate realistic content continuity; 25% are cross-cluster to reflect unpredictable real-world cuts. An additional 25% of the synthesis budget is allocated to short dense hard-cuts (<1 s) to match real-world editing distributions. The pipeline produces 300K training videos containing 11.9M labeled transitions in total.
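The two ideas in this step, cluster-aware pair sampling and synthesis with exact labels, can be illustrated with a minimal sketch. It assumes clusters are given as a mapping from cluster id to clip names and that frames are flat lists of pixel values; the function names, the linear cross-dissolve, and the inclusive label convention are assumptions for illustration and stand in for the pipeline's 30 parameterized subtypes.

```python
import random
from typing import Dict, List, Tuple

Frame = List[float]


def sample_clip_pair(
    clusters: Dict[int, List[str]],
    rng: random.Random,
    same_cluster_prob: float = 0.75,  # 75% same-cluster, 25% cross-cluster
) -> Tuple[str, str]:
    """Draw two clips, from one cluster or from two different clusters."""
    ids = list(clusters)
    if rng.random() < same_cluster_prob:
        eligible = [c for c in ids if len(clusters[c]) >= 2]
        first, second = rng.sample(clusters[rng.choice(eligible)], 2)
        return first, second
    cluster_a, cluster_b = rng.sample(ids, 2)
    return rng.choice(clusters[cluster_a]), rng.choice(clusters[cluster_b])


def dissolve(
    clip_a: List[Frame], clip_b: List[Frame], length: int
) -> Tuple[List[Frame], Tuple[int, int]]:
    """Blend the last `length` frames of clip_a into the first `length` of clip_b.

    Returns the stitched frames and the inclusive (start, end) frame indices
    of the transition, which are known exactly because we created them.
    """
    assert 0 < length <= min(len(clip_a), len(clip_b))
    head, tail = clip_a[:-length], clip_b[length:]
    blended = []
    for i in range(length):
        alpha = (i + 1) / (length + 1)  # weight of clip_b rises linearly
        frame_a = clip_a[len(clip_a) - length + i]
        frame_b = clip_b[i]
        blended.append([(1 - alpha) * x + alpha * y for x, y in zip(frame_a, frame_b)])
    start = len(head)
    return head + blended + tail, (start, start + length - 1)
```

Because the transition is generated rather than annotated, its start and end frames are exact by construction, which is the property that manual labels of gradual transitions lack.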

Large-scale transition source video curation. (1) We collect ~2.5M raw videos from diverse Internet sources. (2) Videos are filtered based on resolution, frame rate, and duration constraints. (3) Temporal continuity and motion strength are verified using frame-level semantic similarity and dense motion tracking. (4) Remaining videos are automatically clustered using the SSL data curation method to group semantically similar videos. (5) Finally, video clips in the same and different clusters are fused to synthesize large-scale shot boundary detection training datasets.

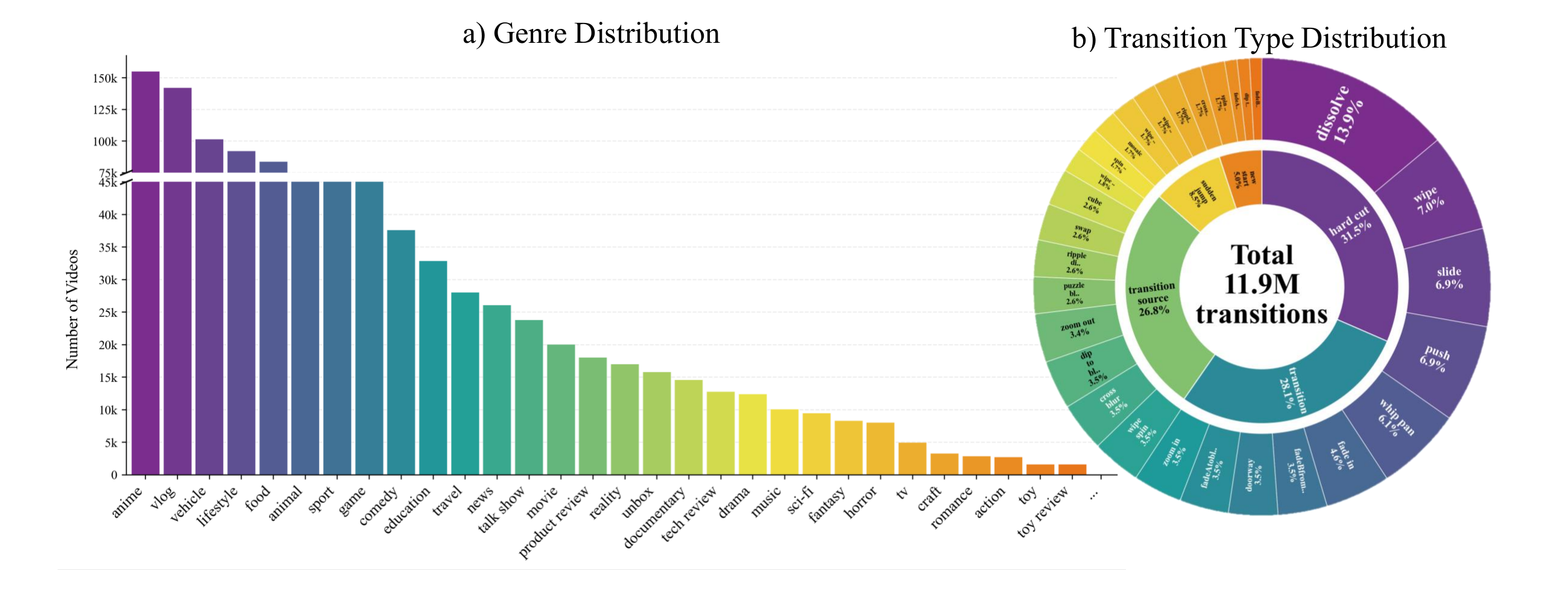

Left: Our curation pipeline scales to internet-scale, wide-domain video collection and yields ~1.5M curated clip sources for transition synthesis; genres are annotated by Qwen3. Right: Transition statistics of the synthetic corpus. The inner ring shows the inter-shot relation distribution, and the outer ring breaks down all main transition types and subtypes that our pipeline can synthesize. In total, we synthesize 11.9M transitions for training.

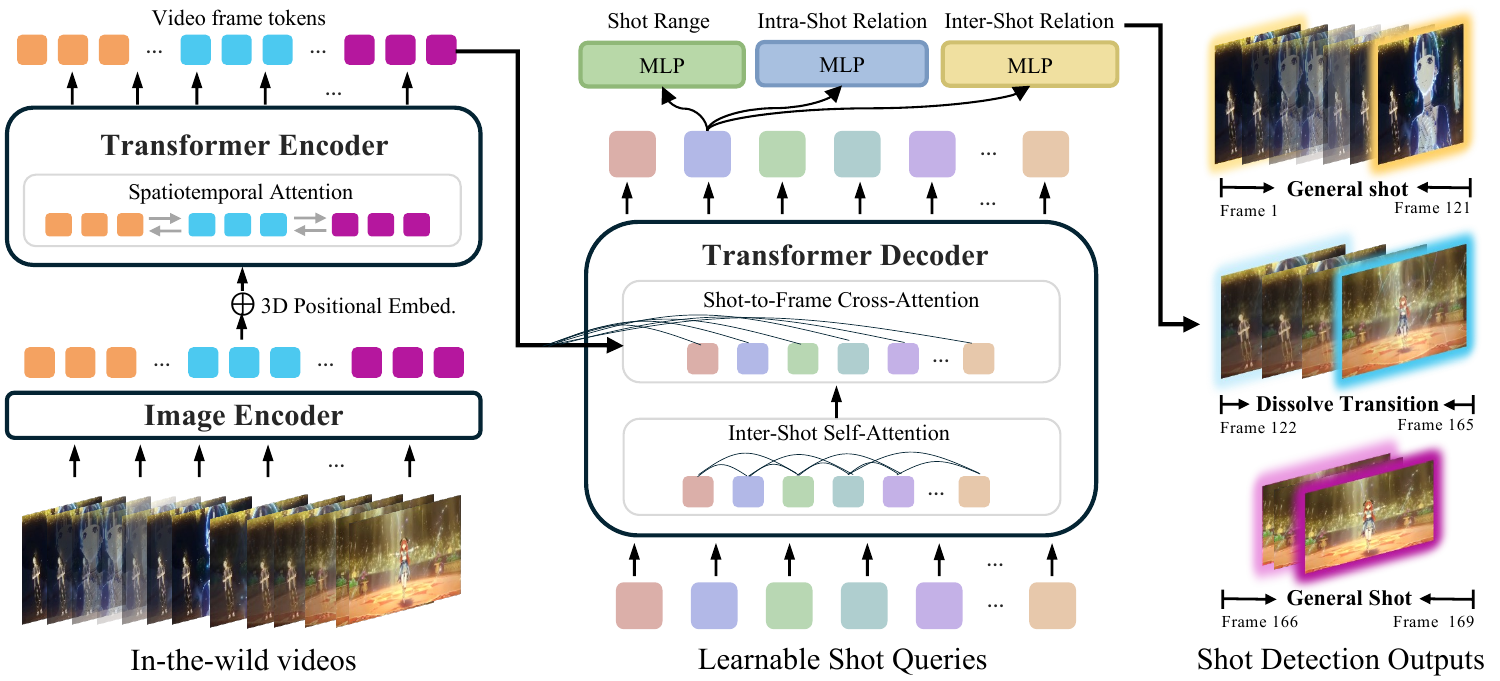

Our architecture is a Shot Query-based end-to-end video Transformer composed of an image encoder, a spatiotemporal Transformer encoder with 3D positional embedding, and a Transformer decoder. Each learnable shot query serves as a shot prediction slot, aggregating shot-specific evidence from video tokens through cross-attention. Three prediction heads — a range head, an intra-relation head, and an inter-relation head — produce all outputs jointly. Range prediction is formulated as discrete classification over frame indices, providing improved localization precision over regression-based approaches.
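To make the classification-based range formulation concrete, the sketch below decodes one shot query whose range head emits one logit per frame index for the start and one per frame index for the end. The function names, the separate start and end logit vectors, and the label lists are assumptions for illustration; the source does not specify the exact head layout or training loss.

```python
import math
from typing import List, Sequence, Tuple


def argmax(values: Sequence[float]) -> int:
    """Index of the largest value (first one on ties)."""
    return max(range(len(values)), key=values.__getitem__)


def frame_index_cross_entropy(logits: Sequence[float], target: int) -> float:
    """Cross-entropy of a softmax over frame indices against the true index."""
    peak = max(logits)
    log_sum_exp = peak + math.log(sum(math.exp(v - peak) for v in logits))
    return log_sum_exp - logits[target]


def decode_query(
    start_logits: Sequence[float],
    end_logits: Sequence[float],
    intra_logits: Sequence[float],
    inter_logits: Sequence[float],
    intra_labels: List[str],  # hypothetical label vocabulary
    inter_labels: List[str],  # hypothetical label vocabulary
) -> Tuple[int, int, str, str]:
    """Turn the three heads' logits for one query into a discrete prediction."""
    return (
        argmax(start_logits),
        argmax(end_logits),
        intra_labels[argmax(intra_logits)],
        inter_labels[argmax(inter_logits)],
    )
```

Under this formulation a boundary is always an integer frame index, so there is no rounding step between the head output and the final cut position, unlike a head that regresses a continuous timestamp.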

Shot Query-based Dense Video Transformer. Frame tokens from input videos are encoded using a spatiotemporal Transformer encoder with 3D positional embedding. Learnable shot queries in the decoder interact with frame features through cross-attention to predict shot range, intra-shot relation, and inter-shot relation.

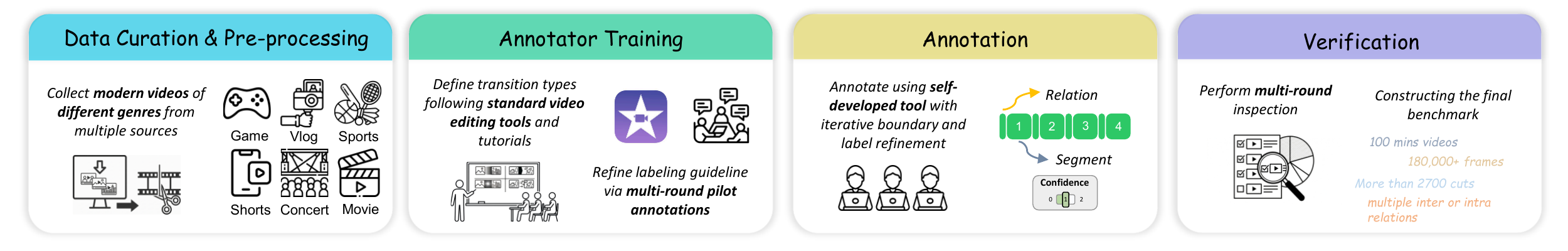

We introduce OmniShotCutBench, a modern shot boundary detection benchmark designed to comprehensively evaluate model performance on the diverse transitions found in modern internet video. Videos are collected primarily from YouTube, TikTok, and Bilibili, covering 8 intra-shot relation categories and 4 inter-shot relation categories. In total, we curate 103 videos (~100 minutes) standardized to 480p at 30 FPS, providing holistic and diagnostic evaluation for contemporary editing styles. We will open-source this benchmark for future research.

Overview of the OmniShotCutBench construction pipeline.

@inproceedings{omnishotcut2026,
  title     = {OmniShotCut: Holistic Relational Shot Boundary Detection with Shot-Query Transformer},
  author    = {Anonymous},
  booktitle = {In Submission},
  year      = {2026},
}